Why Programmers Should(n't) Learn Theory

I’m currently taking my 5-person advanced coaching group on a month-long study of objects. It turns out that, even though things called “objects” are ubiquitous in modern programming languages, true objects are quite different from the popular understanding, and it requires quite a bit of theory to understand how to recognize true objects and when they are useful. As our lessons take this theoretical turn, one asks me “what difference will this make?”

I recently hit my five-year anniversary of teaching professional software engineers, and now is a great time to reflect on the role that theoretical topics have played in my work, and whether I’d recommend someone looking to become the arch-engineer of engineers should include in their path steps I’ve taken in developing my own niche of expertise.

I’ve sometimes described my work as being a translator of theory, turning insights from research into actionable advice from engineers. So I’ve clearly benefited from it myself. And yet I spend a lot of time telling engineers not to study theory, or that it will be too much work for the benefit, or that there are no good books available.

Parts of it are useful sources of software-engineering insight, parts are not. Parts give nourishment immediately; parts are rabbit holes. And some appear to have no relevance to practical engineering until someone invents a new technique based on it.

I now finally write up my thoughts: how should someone seeking to improve their software engineering approach learning theory?

What is theory?

“If I were a space engineer looking for a mathematician to help me send a rocket into space, I would choose a problem solver. But if I were looking for a mathematician to give a good education to my child, I would unhesitatingly prefer a theorizer.”

— Gian-Carlo Rota, “Problem Solvers and Theorizers”

“Theory” in software development to me principally means the disciplines studied by academic researchers in programming languages and formal methods. It includes type theory, program analysis, program synthesis, formal verification, and some parts of applied category theory.

Some people have different definitions. There’s a group called SEMAT, for instance, that seeks to develop a “theory” of software engineering, which apparently means something like writing a program that generates different variants of Agile processes. I don’t really understand what they’ve been up to, and never hear anyone talking about them.

What about everything else which is important when writing software? UX design has a theory. Distributed systems have a theory. Heck, many books about interpersonal topics can be called “theory.”

Well, I’ve stated what I think of when I hear “software engineering” and “theory” together. Plenty of people disagree. You can call me narrow-minded. More importantly though, I’m only writing about things that I’m an expert in by the standards of industry programmers. If you read a couple of textbooks about any of those other subjects, you’ll probably know more than I do.

One more preliminary: I’ve named 5 different subfields, but the boundary between them gets very blurry. There is a litmus test for whether a topic is part of category theory, namely whether it deals with mathematical objects named “categories,” but, otherwise, most specific topics are studied and used in multiple disciplines, and the boundaries between them are defined as much by history and the social ties of researchers as by actual technical differences.

So, for many things, if you ask “What about topic X,” I could say “X is a part of type theory,” but what I’d really mean is “The theoretical aspects of X are likely to show up in a paper or book that labels itself ‘type theory.’”

In the remainder of this piece, I’ll discuss these 5 disciplines of programming languages and formal methods, and discuss how each of them does or does not provide useful lessons for the informal engineer.

Type Theory

“Type theory” can roughly be described as the study of small/toy programming languages (called “calculi”) designed to explore some kind of programming language feature. Usually, those features come with types that constrain how they are used, and, occasionally, those types have some deep mathematical significance, hence the name “type theory.” It can be quite deep indeed — did you know that “goto” statements are connected to Aristotle’s law of the excluded middle? Outside of things like that though, type theory is not about the types.

Studying type theory is the purest way to understand programming language features, which are often implemented in industrial languages in a much more complicated form. And, for this reason, studying type theory is quite useful.

To the uninitiated, programming is a Wild West of endless possibilities. If your normal bag of tricks doesn’t work, you can try monkey-patching in a dynamic proxy to generate a metaclass wildcard. To the initiated, the cacophony of options offered by the word-salad in the previous sentence boils down to a very limited menu: “this is just a Ruby idiom for doing dynamic scope.”

So many thorny software engineering questions become clear when one simply thinks about how to express the problem in one of these small calculi. I think learning how to translate problems and solutions into type theory should be a core skill of any senior developer. Instead of looking at the industry and seeing a churning sea of novelty, one sees but a polishing of old wisdom. Learning type theory, more than any other subfield of programming languages, fulfills the spirit of the Gian-Carlo Rota quote above, that one should pick a theorizer for a good education.

Perhaps the most profound software-engineering lesson of type theory comes from understanding existential types. They are utterly alien to one only familiar with industrial languages. Yet they formalize “abstraction boundaries,” and the differences between different ways of abstraction such as objects vs. modules are best explained by their different encodings into existential types. In fact, I will claim, however, that it is quite difficult (and perhaps impossible, depending on your standards) to fully grasp an abstraction boundary without understanding how to translate it into type theory. Vague questions like “is it okay to use this knowledge here?” become crisp when one imagines an existential package bounding the scope.

Type theory helps greatly in understanding many principles of software design. I’ve found many times I can teach some idea in a few minutes to an academic that takes me over an hour to teach a layprogrammer. But it is by no means inevitable that picking up a type theory book will lead to massive improvement without also studying how to tie it to everyday programming. It’s easy to read over the equality rule for mutable references, for instance, and regard it as a curiosity of symbolic manipulation. And yet I used it as the kernel of a 2,500-word lesson on data modeling. The ideas are in the books, but the connections are not. And thus, indeed, I’ve encountered plenty of terrible code written by theoretical experts.

Program Analysis

Program analysis means using some tool to automatically infer properties of a program in order to e.g.: find bugs. It can be broadly split into techniques that run the code (dynamic analysis) vs. those that inspect it without running it (static analysis); both of these have many sub-families. For example, a dynamic deadlock detector might run the program and inspect what order it acquires locks, and conclude that a program does not follow sound lock-discipline and is thus in danger of deadlocking. (This is different from testing, which may be unable to discover a deadlock without being exceptionally (un)lucky in getting the right timings.) In contrast, a static analyzer would trace through all possible paths in the source code to discover all possible lock orderings. I’ll focus on static analysis for the rest of this section, where most of the theory lies.

First, something to get out of the way: Static analysis has become a bit of a buzzword in industry, where it’s used to describe a smorgasbord of tools that run superficial checks on a program. To researchers, while the term “static analysis” technically describes everything that can be done to a program without running it, it is typically used to describe a family of techniques that, loosely speaking, involve stepping through a program and tracking how it affects some abstract notion of intermediate state, a bit like how humans trace through a program. Industrial bug-finding tools such as Coverity, CodeQL, FindBugs, and the Clang static analyzer all include this more sophisticated kind of static analysis, though they all also mix in some more superficial-but-valuable checks as well. I refer to this excellent article by Matt Might as a beginner-level intro.

This deeper kind of static analysis is done by a number of techniques which have names such as dataflow analysis, abstract interpretation, and effect systems. The lines between the approaches get blurrier the deeper you go, and I’ve concluded that the distinctions between them often boil down to little things such as “constraints about the values of variables cannot affect the order of statements” (dataflow vs. constraint-based analysis) and “the only way to merge information from multiple branches (e.g.: describing the state of a program after running a conditional) is to consider the set containing both” (model-checking vs. dataflow analysis).

Is studying static analysis useful for understanding software engineering? I’ve changed my mind on this recently.

I think the formal definition of an abstraction as seen in static analysis, specifically the subfield called “abstract interpretation,” is useful for any engineer to know. Sibling definitions of abstraction also appear in other disciplines as well such as verification, but one cannot study static analysis without understanding it.

Beyond that, however, I cannot recall any instance where I’ve used any concept from static analysis in a lesson about software engineering.

Static analysis today is focused on tracking simple properties of programs, such as whether two variables may reference the same underlying object (pointer/alias analysis) or whether some expression is within the bounds of an array (covered by “polyhedral analysis” and its simplified forms). When more complex properties are tracked, it is typically centered around usage of some framework or library (e.g.: tracking whether files are opened/closed, tracking the dimensions of different tensors in a TensorFlow program) or even tailored to a specific program (example). Practical deployments of static analysis are a balancing act in finding problems which are important enough to merit building a tool, difficult enough to need one, and shallow enough to be amenable to automated tracking.

A common view is that, to get a static analyzer to track the deeper properties of a program that humans care about, one must simply take existing techniques and just add more effort. As my research is on making tools easier to build, I recently spent weeks thinking about specific examples of which such deeper properties could be tracked upon magically conjuring more effort, and concluded that, on the contrary, building such a “human-level analyzer” is well beyond present technology.

Static analysis is the science of how to step through a program and track what it is doing. Unfortunately, the science only extends to tracking shallow properties. But there is plenty of work tracking more complex properties: it’s done in mechanized formal verification.

Program Synthesis

Program synthesis is exactly what it says on the tin: programs that write programs. I gave an entire talk on software engineering lessons to be drawn from synthesis. There is much inspiration to take from the ideas of programming by refinement and of constraining the search space of programs.

But, that doesn’t mean you should go off and learn the latest and greatest in synthesis research.

First, while all the ideas in the talk are used in synthesis, many of the big lessons are from topics that do not uniquely belong to synthesis. The lessons about abstraction boundaries, for instance, really come from the intersection of type theory and verification.

Second, the majority of that talk is about derivational synthesis, the most human-like of the approaches. Most of the action these days is in the other schools: constraint-based, enumerative, and neural synthesis. All of these are distinctly un-human-like in their operation — well, maybe not for neural, but no-one understands what those neural nets are doing anyway. There are nonetheless software-engineering insights to be had from studying these schools as well, such as seeing how different design constraints affect the number of allowed programs, but if you spend time reading a paper titled “Automated Synthesis of Verified Firewalls,” to use a random recent example, you’re unlikely to get insight into any aspect of software engineering other than how to configure firewalls. (But if that’s what you’re doing, then go ahead. Domain-specific synthesizers do usually involve deep insights about the domain.)

Formal Verification

Formal verification means using a tool to rigorously prove some property (usually correctness) of a program, protocol, or other system. It can broadly be split into two categories: mechanized and automated. In mechanized verification/theorem-proving, engineers attempt to prove high-level statements about a program’s properties by typing commands into an interactive “proof assistant” such as Coq, Isabelle, or Lean. In automated verification, they instead pose a query to the tool, which returns an answer after much computation. Both require extensive expertise to model a program and its properties.

Mechanized verification can provide deep insights about everyday software engineering. For instance, one criteria for choosing test inputs in unit testing is “one input per distinct case,” and doing mechanized verification teaches one what exactly is a “case.” Its downside: in my personal experience, mechanized verification outclasses even addictive video games in its ability to make hours disappear. As much as programming can suck people into a state of flow and consume evenings, doing proofs in Coq takes this to another level. There’s something incredibly addicting about having a computer tell you every few seconds that your next tiny step of a proof is valid. Coq is unfortunately also full of gotchas that are hard to learn about without expert guidance. I remember a classmate once made a subtle mistake early on in a proof, and then spent 10 hours working on this dead end.

I do frequently draw on concepts from this kind of verification. Most notably, I teach students Hoare logic, the simplest technique for proving facts about imperative programs. But I do it mostly from the perspective of showing that it’s possible to rigorously think about the flow of assumptions and guarantees in a program. I tell students to handwave after the first week in lieu of finding an encoding of “The system has been initialized” in formal logic, and even leave off the topic of loop invariants, which are harder than the rest of the logic. Alas, this means students lose the experience of watching a machine show them exactly how rule-based and mechanical this kind of reasoning is.

Automated tools take many forms. Three categories are solvers such as Z3, model-checkers such as TLA+ and CBMC, and languages/tools that automatically verify program contracts such as Dafny.

I have argued before that software design is largely about hidden concepts underlying a program that have a many-many relationship with the actual code, and therefore are not directly derivable from the code. It follows that the push-button tools are limited in the depth of properties they can discuss, and therefore also in their relevance to actual design. Of these, the least push-button is TLA+, which I’ve already written about, concluding there were only a couple things with generalizable software design insights, though just learning to write abstracted models can be useful as a thinking exercise.

In short, learning mechanized verification can be quite deep and insightful, but is also a rabbit hole. Automated verification tools are easier to use, but are less deep in the questions they can ask, and less insightful unless one is actually trying to verify a program.

I’ve thought about trying to create a “pedagogical theorem prover” in which programmers can try to prove statements like “This function is never called unless the system has been initialized” without having to give a complicated logical formula describing how “being initialized” corresponds to an exact setting of bits. Until then, I’m still on the lookout for instruction materials that will provide a nice on-ramp to these insights without spending weeks learning about Coq’s difference between propositions and types.

Category Theory

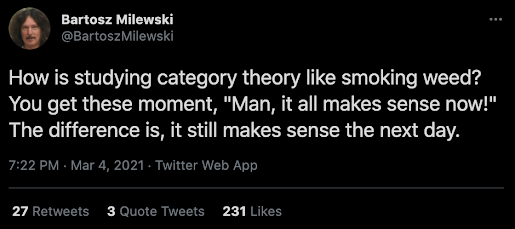

Category theory is a branch of mathematics which attempts to find commonalities between many other branches of mathematics by turning concepts from disparate fields into common objects called categories, which are essentially graphs with a composition rule. In the last decade, category theory in programming has escaped the ivory tower and become a buzzword, to the point where a talk titled “Categories for the Working Hacker” can receive tens of thousands of views. And at MIT last year, David Spivak taught a 1-month category theory course and had over 100 people show up, primarily undergrads but also several local software engineers.

I studied a lot of category theory 8 years ago, but have only found it somewhat useful, even though my research in generic programming touches a lot of topics heavily steeped in category theory. The chief place it comes up when teaching software engineering is a unit on turning programs into equivalent alternatives, such as why a function with a boolean parameter is equivalent to two functions, and how the laws justifying this look identical to rules taught in high school algebra. The reason for this comes from category theory (functions are “exponentials,” booleans are a “sum”), but it doesn’t take category theory to understand.

Lately, I’ve soured on category theory as a useful topic of study, and I became fully disillusioned last year after studying game semantics. Game semantics are a way of defining the meaning of logical statements. Imagine trying to prove a universally-quantified statement like “Scruffles is the cutest dog in the world.” Traditional model-based semantics would say this statement is true if, for all dogs in the world, that dog is either Scruffles or is less cute than Scruffles. Game semantics casts this as a game between you (the “Verifier”) and another party (the “Falsifier”). It goes like this: They present you another dog. If you can show the dog is less cute than Scruffles (or rather, they are unable to find a dog for which you cannot do so), then the statement is true and you win. Otherwise, the statement is false.

(I admit that having this kind of conversation has been my main application of game semantics thus far.)

I started reading the original game semantics paper. The first few sections explained pretty much what I just told you in semi-rigorous terms, and were enlightening. The next section gave category-theoretic definitions of the key concepts. I found this section extremely hard to follow; it would have been easier had they laid it out directly rather than shoehorning it into a category. And while a chief benefit of category theory is the use of universal constructions that allow insights to be transported across disciplines, the definitions here were far too specialized for that to be plausible.

There’s a style of programming called point-free programming which involves coding without variables. So, instead of writing an absolute value function as if x > 0 then x else -x, you write it as sqrt . square, where the . operator is function composition. Category theory is like doing everything in the point-free style. It can sometimes lead to beautifully short definitions that enable a lot of insight, but it can also serve to obscure needlessly.

I’m still open to the idea that there may be a lot of potential in learning category theory. David Spivak told me “A lot of what people do in databases is really Kan extensions” and said his collaborators were able to create an extremely powerful database engine in a measly 5000 lines of Java. Someday, I’ll read his book and find out. Until then, I don’t recommend programmers study category theory unless they like learning math for its own sake.

Conclusion

So, here’s the overall tally of fields and their usefulness in terms of lessons for software design:

- Type theory: Very useful

- Program Analysis: Not useful, other than the definition of “abstraction.”

- Program Synthesis: Old-school derivational synthesis is useful; modern approaches less so.

- Formal Verification: Mechanized verification is very useful; automated not so much.

- Category theory: Not useful, except a small subset which requires no category theory to explain.

So, we have a win for type theory, a win for the part of verification that intersects type theory (by dealing with types so fancy that they become theorems), and a wash for everything else. So, go type theory, I guess.

In conclusion, you can improve your software engineering skills a lot by studying theoretical topics. But most of the benefit comes from the disciplines that study how programs are constructed, not those that focus on how to build tools.

Appendix: Learning Type Theory

Some of you will read the above and think "Great," and then rush to Amazon to order a type theory book.

I unfortunately cannot endorse that, namely because I can't endorse reading textbooks as a good way to learn type theory.

The two main intro type theory textbooks are Practical Foundations for Programming Languages (PFPL) and Types and Programming Languages (TAPL). I'm more familiar with PFPL, but I regard them as pretty similar. Basically, both present the subject as pretty dry, consisting of a list of rules, although they try to add excitement by discussing their significance. The rules have a way of coming alive when you try to come up with them yourself, or come up with rules for a variant of an idea found in a specific programming language. This is how I tutor my undergrads, but it's hard to communicate in book format.

So, what's the right way to learn type theory on your own? I don't have one. The best I can share right now is the general advice that following a course website that uses a book is often better than using the book itself. The best way for practitioners to learn type theory has yet to be built.